The End of Single-Modal AI: Why Everything is Becoming Multimodal

Introduction

For years, artificial intelligence evolved in silos.

Text models wrote content.

Image models generated visuals.

Audio models handled speech.

Each system was powerful but isolated.

That era is ending.

We are now entering a new phase of AI in which systems no longer think in a single format. Instead, they understand and generate across multiple modalities (text, images, video, and audio) simultaneously.

This is the rise of multimodal AI, and it is quickly becoming the default for modern AI systems.

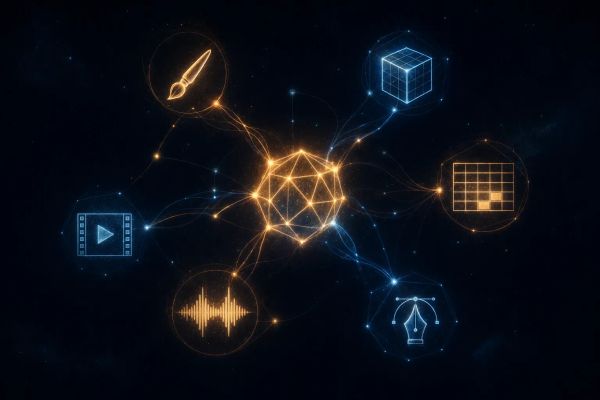

What is Multimodal AI?

Multimodal AI refers to systems that can process and generate multiple types of data at once.

Instead of just responding with text, these systems can:

- Understand an image and describe it

- Generate visuals from text prompts

- Convert voice into structured insights

- Combine multiple inputs into a unified output

In simple terms:

👉 Multimodal AI doesn’t just “read” or “write”; it perceives and creates across formats.

Why Single-Modal AI is Becoming Obsolete

Single-modal systems are limited by design.

A text-only model cannot:

- Generate visual assets

- Understand design context

- Create rich media experiences

Similarly, image-only systems lack:

- reasoning depth

- structured communication

- workflow integration

Modern use cases demand combined intelligence:

- Marketing needs copy + visuals

- Product teams need UI + content

- Developers need logic + assets

This is why multimodal AI is not just an upgrade; it’s a paradigm shift.

Real-World Applications of Multimodal AI

1. Design & Creative Workflows

Instead of switching between tools, creators can:

- Describe a design → generate images instantly

- Refine visuals using natural language

- Maintain brand consistency across assets

2. Marketing & Content Creation

Campaign creation is becoming end-to-end:

- Generate ad copy

- Create supporting visuals

- Adapt content for different platforms

3. Developer Productivity

Developers can now:

- Generate UI components from descriptions

- Create assets alongside code

- Build faster with integrated AI workflows

4. Business Automation

AI systems can:

- Analyze documents + visuals together

- Generate reports with charts and summaries

- Automate multi-step processes

The Shift: From Tools to Systems

The biggest transformation is not just capability; it’s integration.

Earlier:

- You used separate tools for each task

Now:

- One AI system handles the entire workflow

This shift moves AI from being a toolset to becoming a system layer across products.

How Multimodal AI Powers Modern Products

Modern AI products are increasingly being built around multimodal capabilities.

A strong example of this shift is how platforms like Vanikya AI approach content and asset generation.

Instead of being treated as a separate feature, image generation becomes part of a larger workflow:

- Input: A simple business prompt

- Output: Visual assets, content, and insights together

For example:

- A small business owner can generate product visuals

- A marketer can create campaign creatives

- A founder can build branded assets without design tools

This removes much of the friction of switching between separate tools.

The result is not just faster creation but entirely new workflows that were not possible before.

A Practical Example: Image Generation Flow

Let’s break down a simple multimodal workflow:

1. User provides a prompt

   → “Create a modern product banner for a skincare brand”

2. AI interprets intent

   → understands tone, style, and use case

3. AI generates:

   - Visual asset (image)

   - Supporting text (headline, tagline)

   - Variations for different formats

4. User refines with feedback

   → “Make it more minimal and premium”

This loop is:

- fast

- intuitive

- fully integrated

That’s the real power of multimodal AI.
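The loop above can be sketched in code. This is only an illustrative outline, not how any particular product implements it: the names `Brief`, `CampaignOutput`, `interpret`, `generate`, and `refine` are hypothetical, and the model calls are replaced with simple string handling so the example runs on its own.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """Interpreted intent: the request in structured form (hypothetical type)."""
    subject: str
    style: list[str] = field(default_factory=list)


@dataclass
class CampaignOutput:
    """One bundle of outputs produced together (hypothetical type)."""
    image_prompt: str    # what would be passed to an image model
    headline: str        # supporting text
    formats: list[str]   # variations for different placements


def interpret(prompt: str) -> Brief:
    # Stand-in for a model call that would extract tone, style, and use case.
    return Brief(subject=prompt)


def generate(brief: Brief) -> CampaignOutput:
    # Stand-in for image and text generation; this only assembles strings.
    style = ", ".join(brief.style) if brief.style else "default style"
    return CampaignOutput(
        image_prompt=f"{brief.subject} ({style})",
        headline=f"Headline for: {brief.subject}",
        formats=["banner", "square", "story"],
    )


def refine(brief: Brief, feedback: str) -> Brief:
    # Feedback is folded into the existing brief instead of starting over.
    return Brief(subject=brief.subject, style=brief.style + [feedback])


# Steps 1 and 2: the user provides a prompt, the system interprets it.
brief = interpret("Create a modern product banner for a skincare brand")
# Step 3: image prompt, text, and format variations are produced together.
first = generate(brief)
# Step 4: the user refines with feedback and the system regenerates.
brief = refine(brief, "more minimal and premium")
second = generate(brief)
print(second.image_prompt)
```

The design choice worth noting is that the refinement updates a shared brief, so every output (visual, text, and format variations) is regenerated from the same interpreted intent rather than edited separately.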

Why This Matters for the Future

Multimodal AI is not just a feature; it’s becoming the foundation of AI systems.

In the coming years, we’ll see:

- Fewer standalone AI tools

- More integrated AI platforms

- Seamless human-AI collaboration across formats

The interface of AI is evolving from:

👉 Prompt → Response

to

👉 Intent → Execution

Final Thoughts

We are moving beyond the era of single-purpose AI models.

The future belongs to systems that can:

- understand context

- operate across modalities

- execute complete workflows

Multimodal AI is not just improving how we use AI; it is redefining what AI can do.

And as this becomes the default, the gap between idea and execution will continue to shrink.

Explore Multimodal AI in Action

If you’re interested in seeing how multimodal workflows can power real-world use cases, you can explore: